Enterprise chargeback - a better quality of life for operations

We left off with a laptop and a database serving as a bare-minimum proof of concept. It works, but not enough to be usable. Starting a project from scratch doesn’t have to be toil . Moving this into “real infrastructure” gave me an opportunity to implement quality of life improvements.

Let’s do databases better this time

By this point, the project had expanded beyond one person’s exploration and into multiple folks collaborating asynchronously, as time allows. This made the old model of SSH’ing into a database server and running SQL scripts manually dangerous - both for granting so much access and the ability to easily mess up months of data.

To fix this problem, we added two more technologies to the stack. The first was FlywayDB to provide version-control for all of our SQL scripts, schemas, migrations, and more. The second was a testing workflow in our project’s CI system (GitHub Actions) to test these on proposed changes with a small set of sample data. Testing at each pull request saved the project several times later on. Once merged, it could then automatically promote changes into the new production server.

Schema as Code

Database schema as code tools, like Flyway, work by storing information in a git repository. While each has a specific layout, there’s some common patterns.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

.

├── .github/

│ └── workflows/

│ └── test-db.yml # pull request checks

├── app/

│ └── application goes here

└── sql/

├── migrations/

│ ├── repeatable/ # schema applied in any order, after versioned migrations

│ │ ├── R__seed-data.sql

├── R__normalize-data.sql # stored procedures go here too

└── ... etc ...

│ └── versioned/ # apply these schema in order

│ ├── V001__init.sql

│ ├── V002__add-jira.sql

│ └── ... etc ...

├── queries/ # queries can be stored here too!

│ ├── generate-report.sql

│ ├── name-changes.sql

│ └── ... etc ...

├── db.env # not the real data, just for ephemeral testing

└── flyway.conf # configuration files

It took a little bit to get used to not just SSH’ing in and running commands or just opening a database admin GUI. The initial inconvenience was annoying, but this way of managing databases quickly became something the project couldn’t live without. It allowed us to

- Enforce code review through pull requests. It was once normal to “it’s just a small change” and run it directly. Asking for a second set of eyes didn’t cause a huge delay, but did catch a few terribly inefficient SQL queries.

- Test each change with sample data. Getting representative sample data isn’t straightforward, but worth investing in. Each new query, edge case that caused a bug, stored procedure, and more should have enough data to test validity. I didn’t bother with having enough fake data for performance optimizing, as even fake data has real costs.

- Proving changes. Having immediately-accessible, tamper-evident proof of who did what when, where and why made audits and dispute resolution much simpler.

Re-orgs are constant

The “changes are easy to audit” part came up almost immediately in the annual re-organization. Businesses restructure who reports to whom, how time gets charged, and more all the time. The first set of changes were for a re-organization. Proving it came directly from the HR system of record when they were made was how the first billing disputes got resolved.

Since this system handled how employee is assigned licensed software to use, knowing who they report to and where their time is billed is surprisingly difficult beyond a few hundred people. HR information systems are notoriously unkind to work with, but this is the most critical part of the system.

Now do it every month

Putting this into an automation platform that can audit each run was the next step. It has to run on X day of the month or every Monday … or however works best for your company. Rundeck was a hot new choice for this problem at the time. 😅

Whatever it is you build on, it must support

- Audit each run of a job

- Audit changes to a job

- Forward audits to an external logging system

- Role-based access control, such that some people can see jobs and others can stop/start them

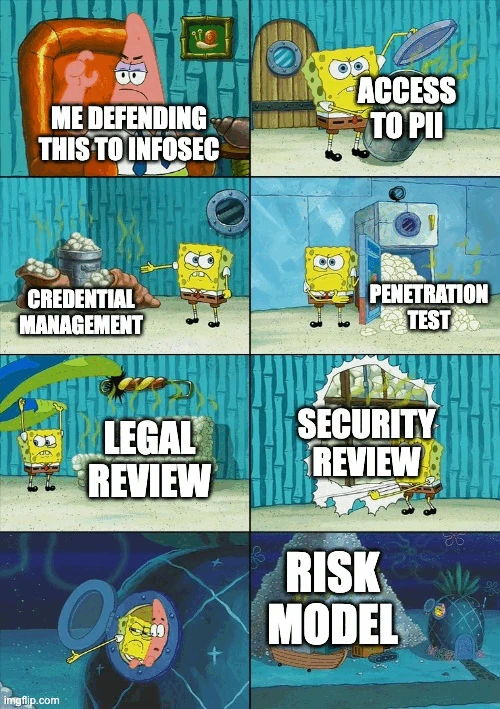

People process is the hardest part

This was the spot that broke me as an engineer. No problem that delayed this project for the next few months was a technical problem. Instead, it was all fixes on a series of things I should have considered from the moment there was a glimmer of value in this data and didn’t think to design for.

Perhaps the moment “junior” becomes “senior” engineer is buried somewhere here. Between all the stakeholders that suddenly appeared, lots of good was implemented.

Excuse me, you have credentials to HOW MANY firm-wide systems?

Okay, we’ll implement grown-up credential management with audited and regular rotation in a secret manager.

What’s the risk model?

Infosec asked “why didn’t you start here?” and I don’t have a good answer to start with. It could be a very nice “crown jewel” to control access to, though.

Did Legal approve this?

Even without access to “protected” PII like date of birth or race, Legal needs to know this data exists. And how it’s stored. And who has access to it. 🫠

What did security say?

The first iterations crashed whenever Nessus scanned it. These were simple fixes. Then, the next few gave me a handful of more challenging problems to fix, mostly in identity and access control. Then the internal threat team phished some guy in the NOC and now they have the latest data scrape with names, unique employee IDs, and which services they use.

Having a safe place to screw up was critically important to actually fixing stuff and learning to do better.

Executives only want a dashboard

After all of the schema migrations and getting folks aligned on changing their tools, the credential rotation, the legal review, the internal phishing incident - the only deliverable that mattered to the people signing off on the project was …

… a spreadsheet attachment in a monthly email. That’s it.

There was a ton more value to find in this data, though.

Next - find some unexpected business value and other paths to explore, even when everyone has an opinion on this new source of data.